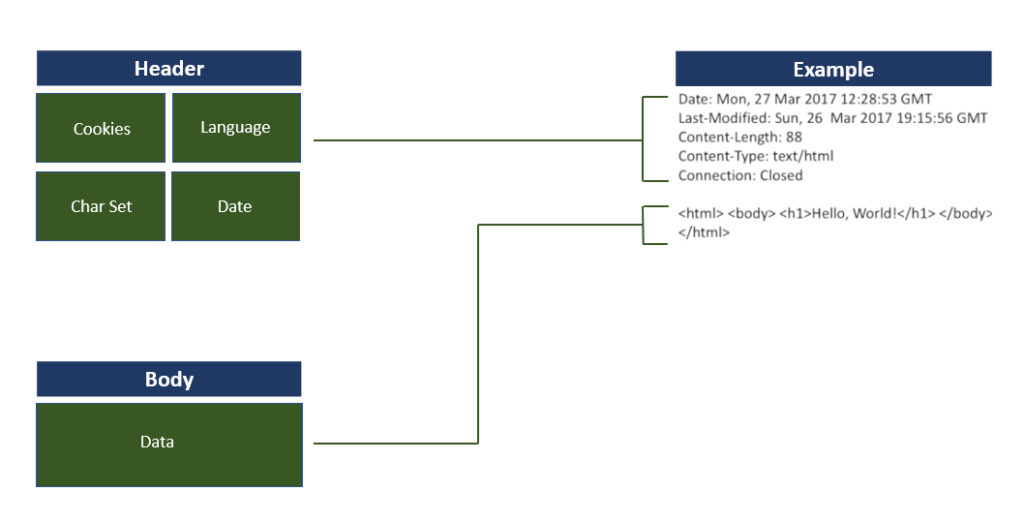

Wired and wireless networks alike suffer from latency, which delays packet delivery from source to destination. Each request from the client carries a set of headers that communicate relevant information to the server. The server, in turn, responds with a set of response headers that contain information such as the size, file type, and date of the resource.

In HTTP/1.x, these headers are sent over the wire uncompressed with every request. A lot of duplicate data is transmitted as a result, which uses bandwidth inefficiently and delays page loads. Users expect instant access to information, without waiting for browsers to render pages. For network operators, it is of utmost importance to provide a high quality of service to retain and attract customers, as this translates into a higher average revenue per user.

What is Header Compression?

Compressing headers reduces the number of bytes that are transmitted during the connection. Consider this example: Todd is visiting an e-commerce website to purchase a gift. On a low-bandwidth network, especially one not using header compression, the response time from the server is longer, and the website renders slowly at Todd’s end.

HTTP/2 uses the HPACK format for header compression, so pages load faster with better interactive response time. A header that repeats across requests needs to be sent in full only once per connection; afterwards it can be referenced by a short index, which significantly reduces the header overhead.

With the rise of mobile, along with more complex websites that need more and more requests to render a page, compressing headers reduces the overhead. It also improves transfer speed and bandwidth utilization.


Problems with earlier protocols: HTTP/1.1 and SPDY

- In HTTP/1.1, the headers of every request are sent uncompressed over the network. Schemes such as gzip or Deflate are used only for content encoding, that is, for the message body.

| Request sent via HTTP/1.1 | Request sent via HTTP/2 |
| --- | --- |
| GET /index.html HTTP/1.1 | :method: GET |
| Host: www.site.com | :scheme: https |
| Referer: https://www.site.com/ | :authority: www.site.com |
| Accept-Encoding: gzip | :path: /index.html |
| | referer: https://www.site.com/ |
| | accept-encoding: gzip |

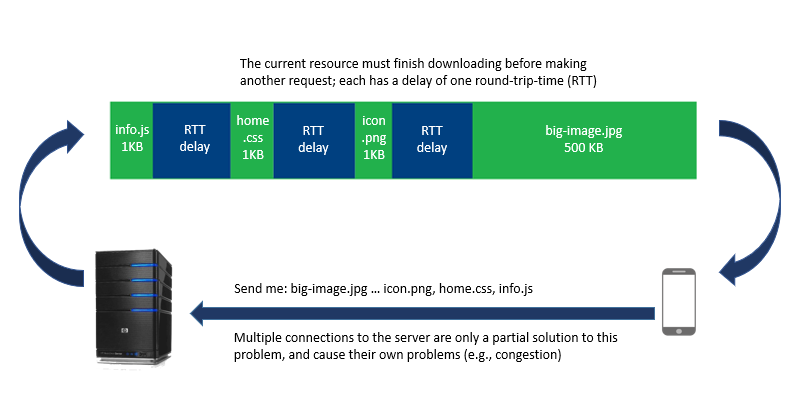

- Head-of-line (HOL) blocking is a major problem with HTTP/1.1: a connection handles one request at a time, so subsequent requests are blocked behind the first one in line, and browsers open only a limited number of connections to the server.

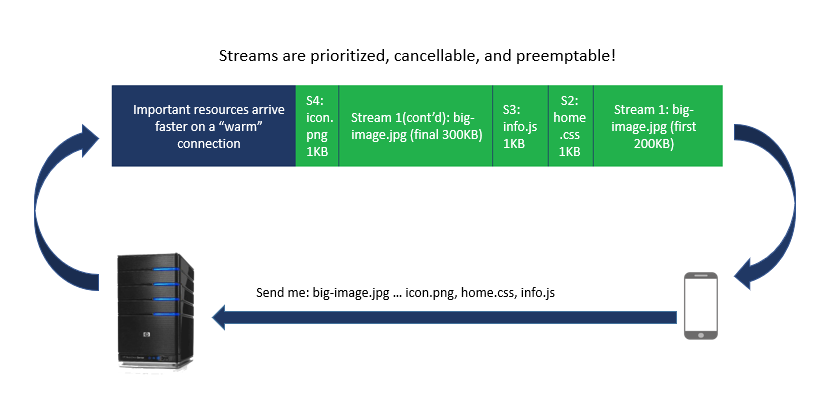

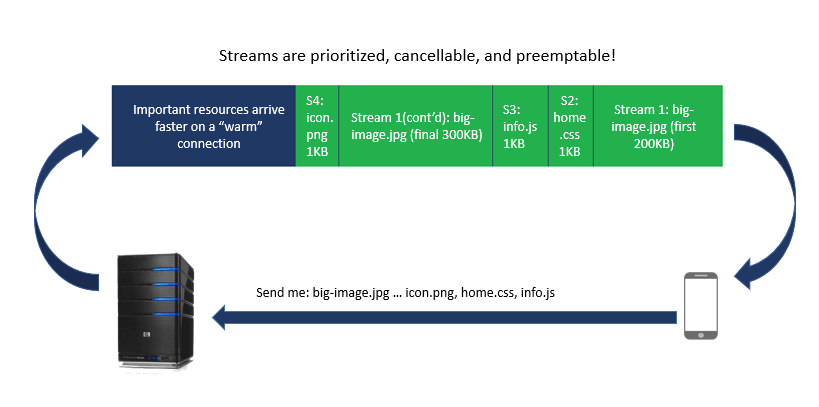

- HTTP/2 solves the HOL blocking problem with multiplexing, which uses streams that can be prioritized.

- Google’s SPDY introduced header compression based on Deflate, using a pre-set dictionary, dynamic Huffman codes, and string matching. This scheme was found to be vulnerable to the CRIME attack, in which a malicious user can extract secret authentication cookies from compressed headers and hijack the session. As a result, most edge networks disabled header compression.

- HTTP/2 introduced a header compression algorithm, HPACK, that is considered to be safe against attacks such as CRIME.
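The leak behind CRIME can be illustrated with a short sketch. It assumes a simplified setup: plain `zlib` (Deflate) without SPDY's pre-set dictionary, a hypothetical cookie value, and a hypothetical `x-guess` header standing in for attacker-controlled data. When the attacker's guess matches the secret, Deflate replaces it with a back-reference and the compressed request gets shorter.

```python
import zlib

# Hypothetical secret header; the attacker cannot read it directly.
SECRET_COOKIE = "cookie: session=7f3a9c2e1b"


def compressed_length(attacker_header):
    """Compress a request that mixes the secret with attacker-chosen data
    and return only the compressed size, which is what an observer sees."""
    request = "\r\n".join([
        "GET / HTTP/1.1",
        "host: www.site.com",
        SECRET_COOKIE,
        attacker_header,
    ])
    return len(zlib.compress(request.encode("ascii")))


# A correct guess repeats the secret, so Deflate encodes it as one match.
right = compressed_length("x-guess: session=7f3a9c2e1b")
# A wrong guess of the same length must be sent mostly as literals.
wrong = compressed_length("x-guess: session=q8w5z0k4m6")

print(right, wrong)  # the correct guess yields the smaller size
```

A real attack refines the guess one character at a time; the sketch only shows why the compressed size depends on the secret.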

HTTP/2: HPACK Compression

- HPACK does not use dynamic Huffman codes or partial backward string matches; instead, it uses three other methods of compression:

  - Static Dictionary – A dictionary of 61 commonly used header fields, some with predefined values. It is defined by the protocol and contains standard entries [name: value pairs] that every HTTP connection uses.

  - Dynamic Dictionary – It is empty at the start of the communication but gets filled later with exchanged values. It contains a list of actual headers being used. This dictionary has limited size, and when new entries are added, old entries might be evicted [FIFO].

  - Huffman Encoding – A static Huffman code is used to encode any string, name or value, that has to be sent literally, which reduces its size.

  Together, the two dictionaries form an indexed list of known and previously transferred values, from which the receiver reconstructs the full header keys and values.

- Each HTTP transfer carries a set of headers with information about the resource and its properties. In HTTP/1.x, this metadata is transferred in plain text and adds an overhead of 500-800 bytes per request, and more if cookies are being used. HTTP/2’s HPACK algorithm compresses request and response metadata; its Huffman encoding results in an average reduction of 30% in header size.

- A proof of concept done by Cloudflare on its network found a 53% reduction in ingress traffic with HTTP/2’s HPACK and a 1.4% savings on egress traffic.

- Three key benefits of HPACK:

  - It is resilient against compression-based attacks such as CRIME.

  - A static Huffman code encodes header strings as compact binary codes, which shrinks even large headers.

  - Indexing allows frequently used headers to be sent as an index number instead of the entire literal string.
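The interplay of the static and dynamic dictionaries can be sketched with a toy encoder. This is a simplified illustration, not the HPACK wire format: it emits Python tuples instead of bytes, skips Huffman coding, and carries only a four-entry excerpt of the static table. The class name `ToyHpackEncoder` and the 4096-byte table size used here are assumptions of the sketch.

```python
from collections import deque

# Small excerpt of the 61-entry HPACK static table (index -> header).
STATIC_TABLE = {
    2: (":method", "GET"),
    4: (":path", "/"),
    5: (":path", "/index.html"),
    7: (":scheme", "https"),
}
STATIC_SIZE = 61  # dynamic entries are numbered from 62 upwards


class ToyHpackEncoder:
    """Toy model of HPACK indexing; hypothetical helper for illustration."""

    def __init__(self, max_size=4096):
        self.max_size = max_size
        self.dynamic = deque()  # newest entry on the left

    @staticmethod
    def _entry_size(name, value):
        # HPACK counts 32 bytes of overhead per dynamic table entry.
        return len(name) + len(value) + 32

    def _lookup(self, header):
        for index, entry in STATIC_TABLE.items():
            if entry == header:
                return index
        for offset, entry in enumerate(self.dynamic):
            if entry == header:
                return STATIC_SIZE + 1 + offset
        return None

    def _add(self, header):
        self.dynamic.appendleft(header)
        # Evict the oldest entries (FIFO) while the table is over its limit.
        while sum(self._entry_size(*e) for e in self.dynamic) > self.max_size:
            self.dynamic.pop()

    def encode(self, headers):
        encoded = []
        for header in headers:
            index = self._lookup(header)
            if index is not None:
                encoded.append(("indexed", index))
            else:
                encoded.append(("literal", header))
                self._add(header)
        return encoded


request = [
    (":method", "GET"),
    (":scheme", "https"),
    (":authority", "www.site.com"),
    (":path", "/index.html"),
    ("referer", "https://www.site.com/"),
    ("accept-encoding", "gzip"),
]
encoder = ToyHpackEncoder()
first = encoder.encode(request)   # three indexed fields, three literals
second = encoder.encode(request)  # every field is now a bare index
print(second)
```

On the second request, every header is found in one of the two tables, so only index numbers would go over the wire.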

Huffman Encoding – How it Works

Let us consider an example to understand this algorithm. Suppose we want to encode the string “happy” using Huffman encoding:

1. Assign weights depending on character frequency

| Character | Frequency |
| --- | --- |
| h | 1 |
| a | 1 |
| p | 2 |
| y | 1 |

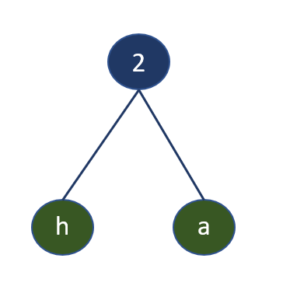

2. Pick out two characters with the smallest weights and combine them into a tree with a root whose weight equals the sum of their individual weights.

3. So now we have the following table:

| Node | Characters | Weight |
| --- | --- | --- |
| Internal node | h, a | 2 |
| Leaf | p | 2 |
| Leaf | y | 1 |

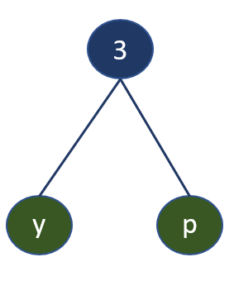

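4. Combine the two minimum weights again to get another root, with the leaf of smaller weight on the left. The leaf y (weight 1) is the smallest; p and the internal node are tied at weight 2, and we pick p:

y + p = 1 + 2 = 3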

5. So now we have the following table with a new internal node:

| Node | Characters | Weight |
| --- | --- | --- |
| Internal node | h, a | 2 |
| Internal node | y, p | 3 |

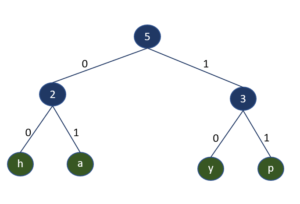

6. Repeating the previous step combines the two internal nodes into the root of the tree, with weight 2 + 3 = 5.

The left child of each node is assigned 0, and the right child is assigned 1. Traversing the tree from the root node to a leaf node gives the code for that character:

| Character | Code |
| --- | --- |
| h | 00 |
| a | 01 |
| p | 11 |
| y | 10 |
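The steps above can be sketched in a few lines of Python. The function name `huffman_codes` is hypothetical, and ties between equal weights are broken by order of first appearance, which is an assumption chosen so that the result matches the table. Note that this builds a code from the message itself; HPACK instead uses one fixed, predefined Huffman table for all connections.

```python
import heapq
from collections import Counter


def huffman_codes(text):
    """Build a Huffman code for the characters of `text`."""
    if not text:
        return {}
    freq = Counter(text)
    # Heap items: (weight, tie-breaker, node). A node is either a single
    # character (leaf) or a (left, right) tuple (internal node).
    heap = [(w, i, ch) for i, (ch, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)    # smallest weight goes left
        w2, _, right = heapq.heappop(heap)   # second smallest goes right
        heapq.heappush(heap, (w1 + w2, next_id, (left, right)))
        next_id += 1

    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):
            walk(node[0], prefix + "0")  # left child adds a 0
            walk(node[1], prefix + "1")  # right child adds a 1
        else:
            codes[node] = prefix or "0"  # single-symbol input edge case

    walk(heap[0][2], "")
    return codes


codes = huffman_codes("happy")
encoded = "".join(codes[ch] for ch in "happy")
print(codes)    # {'h': '00', 'a': '01', 'y': '10', 'p': '11'}
print(encoded)  # 0001111110
```

The encoded string takes 10 bits, compared with 40 bits for five 8-bit characters. A different tie-breaking rule can produce different codes with the same total length.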

How to Implement HTTP/2 on Your Website

To gain the benefits of HTTP/2 header compression, there are two basic steps you need to take:

- Install SSL. Firefox and Chrome only implement HTTP/2 over HTTPS, so you need to get an SSL/TLS certificate and switch each page on your site to HTTPS.

- Enable HTTP/2. All popular web server software packages, including IIS, Apache, and NGINX, offer HTTP/2 support; you just need to enable it!
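Once both steps are done, one way to check the result is to see which protocol the server selects during the TLS handshake, since clients negotiate HTTP/2 over HTTPS through the ALPN extension using the identifier `h2`. The following is a minimal sketch using only Python's standard library; the function names are hypothetical, and the host you pass in is your own site.

```python
import socket
import ssl


def build_alpn_context():
    """TLS client context that offers HTTP/2 ("h2") first, then HTTP/1.1."""
    context = ssl.create_default_context()
    context.set_alpn_protocols(["h2", "http/1.1"])
    return context


def negotiated_protocol(host, port=443, timeout=5.0):
    """Connect to host:port and return the ALPN protocol the server chose.

    Returns "h2" if the server agreed to HTTP/2, "http/1.1" if it fell
    back, or None if the server does not support ALPN at all.
    """
    context = build_alpn_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with context.wrap_socket(raw, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()
```

Calling `negotiated_protocol("www.site.com")`, with the placeholder replaced by your own domain, should return `"h2"` when HTTP/2 is enabled.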